Posted by EditorDavid from the judgment-day dept.

America’s National Institute of Standards and Technology (NIST) has released a new open-source software tool called Dioptra for testing the resilience of machine learning models to various types of attacks.

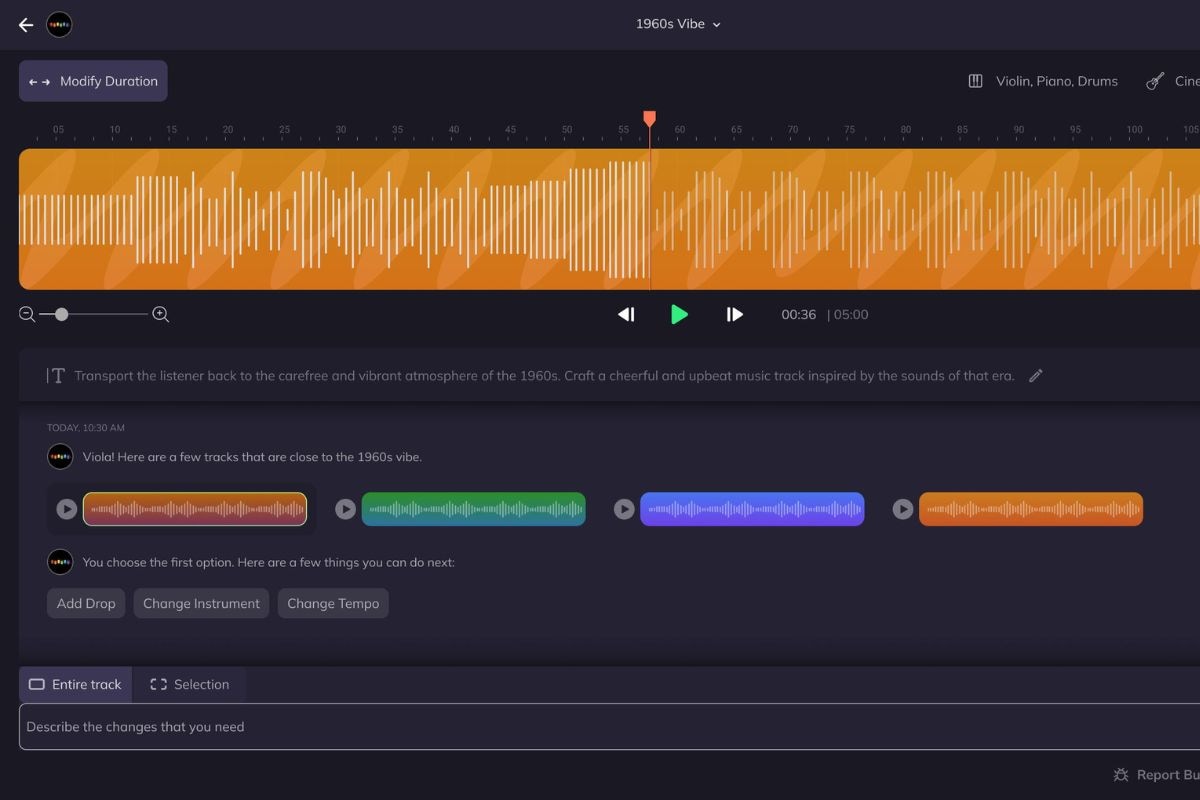

“Key features that are new from the alpha release include a new web-based front end, user authentication, and provenance tracking of all the elements of an experiment, which enables reproducibility and verification of results,” a NIST spokesperson told SC Media:

Previous NIST research identified three main categories of attacks against machine learning algorithms: evasion, poisoning and oracle. Evasion attacks aim to trigger an inaccurate model response by manipulating the data input (for example, by adding noise), poisoning attacks aim to impede the model’s accuracy by altering its training data, leading to incorrect associations, and oracle attacks aim to “reverse engineer” the model to gain information about its training dataset or parameters, according to NIST.

The free platform enables users to determine to what degree attacks in the three categories mentioned will affect model performance and can also be used to gauge the use of various defenses such as data sanitization or more robust training methods.

The open-source testbed has a modular design to support experimentation with different combinations of factors such as different models, training datasets, attack tactics and defenses. The newly released 1.0.0 version of Dioptra comes with a number of features to maximize its accessibility to first-party model developers, second-party model users or purchasers, third-party model testers or auditors, and researchers in the ML field alike. Along with its modular architecture design and user-friendly web interface, Dioptra 1.0.0 is also extensible and interoperable with Python plugins that add functionality… Dioptra tracks experiment histories, including inputs and resource snapshots that support traceable and reproducible testing, which can unveil insights that lead to more effective model development and defenses.
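As a rough illustration of the three attack categories described above (evasion, poisoning and oracle), here is a toy Python sketch. It is not Dioptra code and does not use Dioptra's API: the one-dimensional threshold classifier, the sample data, and every function name (`train`, `predict`, `accuracy`, `recover_threshold`) are assumptions invented for this example.

```python
# Toy illustration only: a 1-D threshold classifier, not Dioptra code.
# All names and data below are hypothetical.

def train(samples):
    """Place the decision threshold halfway between the two class means."""
    zeros = [x for x, label in samples if label == 0]
    ones = [x for x, label in samples if label == 1]
    return (sum(zeros) / len(zeros) + sum(ones) / len(ones)) / 2


def predict(threshold, x):
    """Return class 1 for inputs above the threshold, else class 0."""
    return 1 if x > threshold else 0


def accuracy(threshold, samples):
    """Fraction of samples whose predicted label matches the true label."""
    hits = sum(predict(threshold, x) == label for x, label in samples)
    return hits / len(samples)


def recover_threshold(query, low, high, steps=40):
    """Oracle attack: binary-search the boundary using only label queries.

    Assumes query(low) == 0 and query(high) == 1.
    """
    for _ in range(steps):
        mid = (low + high) / 2
        if query(mid) == 1:
            high = mid
        else:
            low = mid
    return (low + high) / 2


clean = [(1.0, 0), (2.0, 0), (3.0, 0), (7.0, 1), (8.0, 1), (9.0, 1)]
clean_threshold = train(clean)                            # 5.0
baseline = accuracy(clean_threshold, clean)               # 1.0

# Evasion: shift a class-1 input across the boundary at inference time.
evaded_label = predict(clean_threshold, 7.0 - 2.5)        # 0 instead of 1

# Poisoning: inject mislabelled points into the training set.
poisoned = clean + [(20.0, 0)] * 3
poisoned_threshold = train(poisoned)                      # 9.5
poisoned_accuracy = accuracy(poisoned_threshold, clean)   # 0.5

# Oracle: the attacker sees only predicted labels, never the threshold itself.
stolen = recover_threshold(lambda x: predict(clean_threshold, x), 0.0, 10.0)

print(baseline, evaded_label, poisoned_accuracy, round(stolen, 6))
```

Comparing `baseline` with `poisoned_accuracy` is the kind of before-and-after measurement the article describes, here reduced to a single number on a six-point dataset. Real models and attacks are far more complex than this sketch.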

NIST also published final versions of three “guidance” documents, according to the article. “The first tackles 12 unique risks of generative AI along with more than 200 recommended actions to help manage these risks. The second outlines Secure Software Development Practices for Generative AI and Dual-Use Foundation Models, and the third provides a plan for global cooperation in the development of AI standards.”

Thanks to Slashdot reader spatwei for sharing the news.
